Reporting and Read-Out Phases: Demonstrate Your Pentest’s Value

Hack Your Pentesting Routine

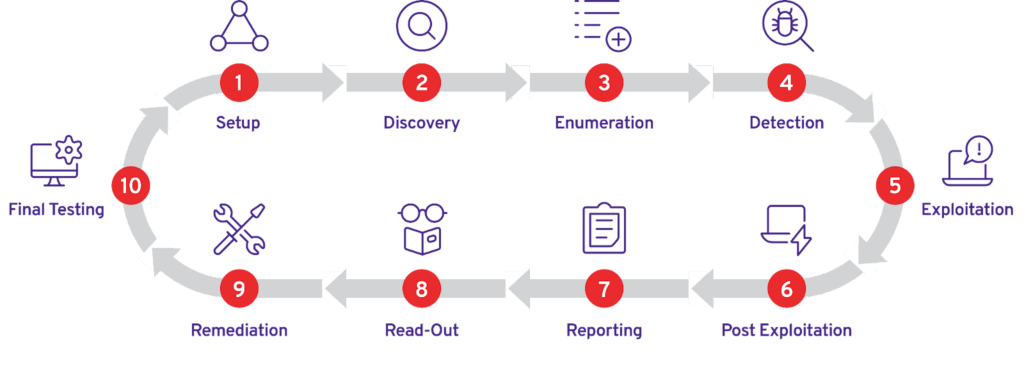

In this series, we’re discussing the ten key phases of a penetration test, talking about the serious pain points in each, and demonstrating how PlexTrac can eliminate these problems.

In this approach, there are 10 phases of the penetration test engagement, each defined by a different group of stakeholders, participants or activities requiring a serious context shift.

Check out the complete discussion of the pentesting cycle in our “Introducing ALL the Phases of Pentesting” article.

Want to learn more about how PlexTrac can transform your pentesting practice today? Request a demo.

Penetration Testing Phases Seven and Eight

Our next phases of the pentest include:

- Reporting

- Read-Out

These two phases, especially reporting, can be considered monotonous or tedious; however, they are some of the most important and impactful pieces of the security assessment and testing puzzle. When teams receive the end result of a security engagement (be it a penetration test, red teaming, purple teaming, or vulnerability assessment), the results of the activity are the primary focus. Presenting clear, actionable results from an engagement, regardless of whether it’s a snapshot-in-time assessment or one that follows a continuous cadence, is paramount. The role that offensive security assessment and testing plays within the technology ecosystem is to ensure that the people, processes, and investments organizations have placed into their cybersecurity teams and programs are operating effectively and efficiently. Without clear, accurate assessment output, there is no way to measure the areas of the organization that underwent the assessment.

It doesn’t matter if you employ the brightest hackers or have top-notch security engineers. If you cannot communicate the results of your assessment and testing activity meaningfully so that organizations can take action, collaborate, mitigate flaws, and strengthen their security posture, you’re not providing the appropriate value.

Reporting and Read-Out: Tools of the Trade

These phases are broken out because they follow the typical flow of an assessment. Often a report is delivered for the engagement activity; once the recipients have reviewed the data, they can begin to investigate, prepare questions, and mitigate findings. After having time to digest the results, the recipients will typically meet with the assessors and spend time walking through the data, a meeting colloquially known as “the read-out.”

This flow may vary depending on the organization: some do reporting piecemeal and engage teams during testing, while others hold final data until it’s been verified and curated. Regardless of the flow, it’s still typical for a final report to be delivered in a “complete” state and for the individuals who created the data to present it to the recipients.

Because the Microsoft Office suite is ubiquitous in many professional organizations, it is not uncommon for teams to leverage software like PowerPoint, Word, and Excel to present findings as a final report deliverable. Converting documents into PDF is also common, because PDF reader software is free and most web browsers can open PDFs. The key question is how to get the data into a final deliverable format.

Some tools and techniques include the following:

- Manual Hand-Jamming — Taking the output from various assessment and testing activity — perhaps from tools, scripts, command terminals — and manually copying the data over into a report document.

- DIY Reporting Solution — Using a home-grown application to consume the raw outputs from assessment and testing, including manual activity and assessment tool output, and wrapping the results with a narrative that provides context. The application typically generates a deliverable, or in some cases can be used to present the results.

- Open-Source Reporting Solution — Very similar to the DIY solution above, this application or set of tools is shared publicly and usually not developed by the organizations utilizing them. However, the workflows are similar. Input of raw assessment output is combined and transformed into a deliverable.

- Professional Reporting Solution — Similar to the two options above, professional reporting solutions are supported products that are meant to aid in reporting and reading out the results of security assessment and testing activity.

- Readout Meeting — Typically, the output or deliverable produced from one of the above solutions is the subject of a readout meeting. This is an in-person or virtual opportunity to provide a summary, explain the results, and provide time for the recipients of the report to ask questions and work with the team that provided the assessment or test.
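The DIY and open-source workflows above share one core step: raw output from several sources is combined and transformed into a deliverable. As a rough illustration only, the sketch below merges findings from two hypothetical sources, drops duplicates, sorts by severity, and renders a plain Markdown report. The field names (`title`, `severity`, `asset`, `source`), the severity scale, and the sample data are all assumptions made for this example; real tool output will look different and usually needs a parser per tool.

```python
from dataclasses import dataclass

# Assumed severity scale for this sketch; adjust to your own methodology.
SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3, "info": 4}


@dataclass(frozen=True)
class Finding:
    title: str
    severity: str
    asset: str
    source: str  # which tool or tester produced the finding


def consolidate(raw_findings):
    """Normalize raw findings, drop duplicates, and sort by severity."""
    unique = {}
    for item in raw_findings:
        finding = Finding(
            title=item["title"].strip(),
            severity=item["severity"].lower(),
            asset=item["asset"].strip(),
            source=item.get("source", "manual"),
        )
        # Two findings count as duplicates when title and asset match.
        key = (finding.title.lower(), finding.asset.lower())
        unique.setdefault(key, finding)  # keep the first occurrence
    return sorted(
        unique.values(),
        key=lambda f: (SEVERITY_ORDER.get(f.severity, 99), f.title),
    )


def render_report(engagement, findings, narrative):
    """Wrap the findings in a short narrative and return Markdown text."""
    lines = [f"# {engagement}", "", narrative, "", "## Findings", ""]
    for number, f in enumerate(findings, start=1):
        lines.append(
            f"{number}. [{f.severity.upper()}] {f.title} ({f.asset}, via {f.source})"
        )
    return "\n".join(lines)


# Hypothetical sample data standing in for manual notes and scanner output.
raw = [
    {"title": "Default admin credentials", "severity": "High",
     "asset": "10.0.0.5", "source": "manual"},
    {"title": "Outdated TLS configuration", "severity": "medium",
     "asset": "web01", "source": "scanner"},
    {"title": "default admin credentials ", "severity": "high",
     "asset": "10.0.0.5", "source": "scanner"},
]

report = render_report(
    "Example Engagement", consolidate(raw), "Summary narrative goes here."
)
print(report)
```

Even this toy version hints at where the maintenance cost comes from: every new tool format, severity scheme, or template change means more code for someone to own.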

Benefits and Challenges of the Reporting Phase of Penetration Testing

Manual Hand-Jamming

“Copy-pasta,” “carpal tunnel-maker,” and “hacker’s despair!” are just a few of the terms that come to mind when the idea of manually taking data from security testing activity and entering it into a report deliverable is brought up. One reason folks use this method is that they think, “It’s cheap, it’s easy, it’s fast, and I can knock it out.” The problem is that this method can be error-prone. Copy-and-paste mistakes, other human error, and the monotony of having a skilled professional work through formatting issues are some of the challenges faced by organizations that cook with the copy-and-paste method for reporting. It can take valuable time away from technical assessment work and is frustrating. Consolidating data and collaborating can also be difficult when reporting manually.

Some of the benefits are that people usually already know how to use word processing and spreadsheet software and can rapidly begin entering data into a master template. Even so, this is the paradigm that makes folks groan the most.

DIY Reporting Solution

For organizations that have the in-house skill set, this can be a way to elevate the manual reporting process into one that is more refined. These teams can tailor the reporting platform to their specific workflows, take away a lot of the arduous portions of reporting, and begin to automate.

However, many teams begin to realize that supporting the in-house platform can become a full-time job for one or more employees. Often the in-house staff who support the reporting solution have many other duties, and the reporting solution may become yet another item under “other duties as assigned.” Bugs, hot fixes, and issues that crop up become a constant whack-a-mole scenario. Supporting the internal reporting solution can cost more in time than organizations anticipate when they focus only on dollars and cents.

Open-Source Reporting Solution

Using community-supported reporting platforms usually fits the budgetary requirements (i.e., free) that many organizations face when tackling the problem of how to deliver the work products of assessment and testing activity. These solutions are also customizable, provided you have staff with the in-house skill set to modify the solution. It is easier to start from an existing application than to build one from scratch, and many of these solutions fit common, industry-accepted methodologies when it comes to the workflows for security testing and reporting.

Some of the drawbacks are that, similar to DIY, the investment in operationally supporting the platform is left to the organization’s staff and possibly some community members who can respond when they’re free. These open-source platforms were typically DIY solutions first, developed for a specific tester or set of testers, who then decided to open-source them to benefit the security community at large. These can fit the bill for snapshot-in-time, project-based assessments, which often fit into well-defined (yet opinionated) expectations for testing workflows.

However, these solutions aren’t usually built to scale well and can still eat up resources for support. They work well with well-defined, traditional, and expected types of testing. When it comes time to evolve to newer types of testing or to scale beyond snapshot-in-time assessments, a significant amount of effort may need to be expended to evolve the open-source platform as well.

Professional Reporting Solutions

A professional or commercial reporting platform is typically adopted by organizations after they have tried the previously mentioned solutions. These applications are often focused on reporting workflows that follow well-defined, industry-accepted practices. Some also incorporate the functionality to expand and evolve and can help with creating new types of security assessments and testing engagements.

One of the largest benefits of purchasing a professional solution is outsourcing the support aspect. Reporting platform providers that have a staff of people for support, as well as development efforts, free up the technical staff to perform their core duties, rather than worry about supporting or enhancing the reporting platform. The more mature and robust professional reporting platform companies demonstrate a commitment to the success of the teams and organizations that are users of their platform. The platforms usually take into account the requests and needs of their users and adopt new features to enhance the platform.

A limitation that comes with purchasing a professional reporting platform is that very specific uses or workflows may need to be adjusted to match the expected use of the platform. Further, changes and enhancements may not happen as rapidly as with a DIY solution, because the features are tested and released carefully, based on a schedule, rather than ad hoc or instantly.

Benefits and Challenges of the Read-Out Phase of Penetration Testing

The most notable difference between read-out methods depends on the deliverable. In many cases, the organization is sent a PDF document, perhaps with an accompanying Excel spreadsheet, detailing the findings. During a read-out, the assessors will present the document and work through it, and the recipients will have an opportunity to discuss and ask questions. With a static deliverable like this, real-time collaboration on the content (e.g., findings within the report) is not possible.

When providers of security services, whether internal teams or outside consultants, give recipients the ability to interact with the assessment data during the assessment or after it is complete, the read-out can be more collaborative. Consuming the findings and narrative digitally via an interactive platform can make the results much more actionable than receiving them through a static, text-based document.
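One way to see why structured, interactive findings can be more actionable than a static document: once each finding is a record with a status and an owner, questions that come up in a read-out can be answered on the spot. The sketch below is a hypothetical illustration, not any particular platform's data model; the field names (`id`, `severity`, `status`, `owner`) and the sample records are assumptions.

```python
from collections import Counter

# Hypothetical findings as they might look when tracked as structured data.
findings = [
    {"id": "F-1", "severity": "high", "status": "open", "owner": "network team"},
    {"id": "F-2", "severity": "medium", "status": "in progress", "owner": "web team"},
    {"id": "F-3", "severity": "high", "status": "closed", "owner": "web team"},
]


def remaining_by_severity(items):
    """Count findings that still need work, grouped by severity."""
    return Counter(item["severity"] for item in items if item["status"] != "closed")


def assigned_to(items, owner):
    """List the IDs of unresolved findings owned by a given team."""
    return [
        item["id"]
        for item in items
        if item["owner"] == owner and item["status"] != "closed"
    ]


print(dict(remaining_by_severity(findings)))
print(assigned_to(findings, "web team"))
```

With a PDF, answering "what is still open, and who owns it?" means rereading the document; with structured data it is a simple query that stays current as statuses change.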

Regardless of whether the deliverable is a static document (or set of documents) or digital access via a portal-style delivery mechanism, the success of the read-out depends on how well the results are proven and explained and how clearly the remediation steps are outlined. When the read-out is clear, organizations understand what’s wrong and the path to fix it, which puts them on the right track to raise their security posture and proves the assessment and testing efforts successful.

Use PlexTrac Every Step of the Way

The PlexTrac platform was originally built by a penetration tester for penetration testers to ensure that the results of security assessment and testing activity were actionable, clear, and helped security teams prioritize and triage the flaws revealed by penetration testing. The platform has since evolved into a full-spectrum security assessment and testing life cycle management and report collaboration system. Whether it’s red teaming, pentesting, vulnerability assessments, purple teaming, or compliance testing, the PlexTrac platform makes managing and acting on the results of security testing effective and efficient.

PlexTrac saves pentest teams time and resources while also ensuring that organizations receive the maximum value out of their offensive assessments and testing, whether outsourcing or conducting them internally.